Updates February 9

PGrid


A bunch of work related to migrating off the old GPU tables:

- Ran over 100 GPU variant specs searches. An audit by Codex 5.3 found that the normalisation didn’t fully work, so used Codex 5.3 to write a script that edits or merges the problematic GPU variants.

- Moved the Next.js frontend to ClickHouse-only queries for the GPU details tables, bringing it in line with the other category details tables.

- Deleted the classify frontend, whose backend relied on many of the old GPU tables.

- Ran a smoke test across Australian retailers to make sure GPU scraping worked for generic items.

- Cleaned up the sales-checking code that looked for price decreases in the GPU tables and sent Discord alerts.

- Deleted the ClickHouse integrity-check functions (used to check for differences between Postgres and ClickHouse query results).

- Ran a local cutover test with two databases: one local and one fake prod. Ran through the cutover procedure on the fake prod (delete the GPU variants, then sync the new GPU variants and classifications). Generic GPU scraping on eBay and Amazon also worked. Also ran a full sync to local ClickHouse and tested the local Next.js frontend. The only issue was minor: some price-history graphs differed because the new version doesn’t yet have full classification coverage.

- Fixed a bug in the classification pipeline where specs search rows weren’t deleted when the classification AI made a reclassify decision (this affected every category except RAM). The fix is in a shared util.

- Added error logging to specs search for when the same item keeps getting requeued.

- The biggest issue with the new GPU classification has been the classification AI choosing to specs-search items instead of classifying them, because the titles lack enough information. What ended up working was to run the specs search anyway, but auto-classify the item if the search returns a variant that already exists in the database.
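The normalise-and-merge step from the first bullet can be sketched roughly as follows. This is a minimal illustration, not the Codex-generated script: the normalisation rules, the `BRAND_WORDS` set, and the keep-the-lowest-id merge policy are all assumptions.

```python
import re
from collections import defaultdict

# Hypothetical: brand words that don't distinguish one GPU variant from another.
BRAND_WORDS = {"nvidia", "geforce", "amd", "radeon"}


def normalise_variant_name(name: str) -> str:
    """Return a canonical key for a GPU variant name (illustrative rules only)."""
    s = name.lower()
    s = re.sub(r"(\d+)\s*gb\b", r"\1gb", s)  # "16 GB" -> "16gb"
    s = re.sub(r"[^a-z0-9]+", " ", s)  # punctuation such as "-" becomes a space
    return " ".join(t for t in s.split() if t not in BRAND_WORDS)


def plan_merges(variants: dict[int, str]) -> dict[int, int]:
    """Map each duplicate variant id to the id it should be merged into.

    Variants whose names normalise to the same key are treated as duplicates;
    the lowest id in each group is kept (an assumed policy).
    """
    groups: dict[str, list[int]] = defaultdict(list)
    for variant_id, name in variants.items():
        groups[normalise_variant_name(name)].append(variant_id)
    merges: dict[int, int] = {}
    for ids in groups.values():
        keep = min(ids)
        for variant_id in ids:
            if variant_id != keep:
                merges[variant_id] = keep
    return merges


variants = {
    1: "NVIDIA GeForce RTX 4060 Ti 16GB",
    2: "RTX 4060 Ti 16 GB",
    3: "Radeon RX 7800 XT",
    4: "AMD RX-7800 XT",
}
print(plan_merges(variants))  # {2: 1, 4: 3}
```

Producing a merge plan first, rather than editing rows directly, makes it easy to review which variants would be collapsed before anything is deleted.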
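For context on the sales-checking bullet, a price-drop check with a Discord alert might look like the sketch below. The `PricePoint` shape, the 5% threshold, and the message format are hypothetical; the only external details relied on are that a Discord webhook accepts a JSON POST with a `content` field and that message content is capped at 2000 characters.

```python
import json
import urllib.request
from dataclasses import dataclass

DISCORD_CONTENT_LIMIT = 2000  # Discord's maximum message length in characters


@dataclass
class PricePoint:
    # Hypothetical shape of one listing's price history entry.
    listing_id: str
    title: str
    previous_price: float
    current_price: float


def find_price_drops(points: list[PricePoint], min_drop_pct: float = 5.0) -> list[tuple[PricePoint, float]]:
    """Return (point, drop %) for listings whose price fell by at least min_drop_pct."""
    drops = []
    for p in points:
        if p.previous_price > 0 and p.current_price < p.previous_price:
            pct = (p.previous_price - p.current_price) / p.previous_price * 100
            if pct >= min_drop_pct:
                drops.append((p, pct))
    return drops


def build_discord_payload(drops: list[tuple[PricePoint, float]]) -> dict:
    """One line per drop, truncated to Discord's content limit."""
    lines = [
        f"{p.title}: ${p.previous_price:.2f} -> ${p.current_price:.2f} (-{pct:.1f}%)"
        for p, pct in drops
    ]
    return {"content": "\n".join(lines)[:DISCORD_CONTENT_LIMIT]}


def send_discord_alert(webhook_url: str, payload: dict) -> int:
    """POST the payload to a Discord webhook and return the HTTP status."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "User-Agent": "price-drop-sketch/0.1"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


points = [
    PricePoint("a1", "RTX 4070 Super", 999.0, 899.0),
    PricePoint("b2", "RX 7800 XT", 749.0, 745.0),
]
drops = find_price_drops(points)
print(build_discord_payload(drops)["content"])  # RTX 4070 Super: $999.00 -> $899.00 (-10.0%)
```

`send_discord_alert` is left uncalled here; the webhook URL would come from configuration.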
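The cutover steps from the local test (delete the GPU variants, then sync the new variants and classifications) can be wrapped in a single transaction so a failed sync leaves the old data in place. A minimal sketch using SQLite as a stand-in; the table and column names are hypothetical, and the real cutover targets the production databases. `classification_coverage` illustrates the kind of check that would surface the incomplete coverage seen in the price-history graphs.

```python
import sqlite3


def cutover_gpu_variants(conn, new_variants, new_classifications):
    """Replace all GPU variants and classifications atomically (hypothetical schema)."""
    with conn:  # commits on success; rolls back every statement below if one fails
        conn.execute("DELETE FROM gpu_classifications")
        conn.execute("DELETE FROM gpu_variants")
        conn.executemany("INSERT INTO gpu_variants (id, name) VALUES (?, ?)", new_variants)
        conn.executemany(
            "INSERT INTO gpu_classifications (item_id, variant_id) VALUES (?, ?)",
            new_classifications,
        )


def classification_coverage(conn) -> float:
    """Fraction of items that have at least one GPU classification."""
    total = conn.execute("SELECT COUNT(*) FROM items").fetchone()[0]
    covered = conn.execute("SELECT COUNT(DISTINCT item_id) FROM gpu_classifications").fetchone()[0]
    return covered / total if total else 0.0


conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE items (id INTEGER PRIMARY KEY, title TEXT);
    CREATE TABLE gpu_variants (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE gpu_classifications (item_id INTEGER, variant_id INTEGER);
    INSERT INTO items VALUES (1, 'RTX 4060 8GB'), (2, 'RX 7800 XT'), (3, 'Graphics card');
    INSERT INTO gpu_variants VALUES (10, 'old variant');
    INSERT INTO gpu_classifications VALUES (1, 10);
    """
)
cutover_gpu_variants(conn, [(1, "rtx 4060 8gb"), (2, "rx 7800 xt 16gb")], [(1, 1), (2, 2)])
print(classification_coverage(conn))  # 2 of the 3 items are classified
```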
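The last three bullets (clearing specs search rows on reclassify in a shared util, logging repeated requeues, and auto-classifying when specs search returns a known variant) fit together roughly like this. A minimal in-memory sketch: `PipelineState`, `MAX_REQUEUES`, and the return labels are hypothetical stand-ins for the real tables and queue, and the path for genuinely new variants is left out.

```python
import logging
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional

logger = logging.getLogger("specs_search")
MAX_REQUEUES = 3  # hypothetical limit before an error is logged


@dataclass
class PipelineState:
    """In-memory stand-ins for the real tables (all hypothetical)."""

    variants: dict[str, int]  # variant name -> variant id
    classifications: dict[str, int] = field(default_factory=dict)  # item id -> variant id
    specs_search_rows: dict[str, dict] = field(default_factory=dict)  # item id -> pending row
    requeue_counts: Counter = field(default_factory=Counter)


def classify_item(state: PipelineState, item_id: str, variant_id: int) -> None:
    """Shared util: record a (re)classification and clear any stale specs search row."""
    state.specs_search_rows.pop(item_id, None)
    state.classifications[item_id] = variant_id


def requeue(state: PipelineState, item_id: str) -> bool:
    """Requeue an item; log an error and refuse once it exceeds MAX_REQUEUES."""
    state.requeue_counts[item_id] += 1
    count = state.requeue_counts[item_id]
    if count > MAX_REQUEUES:
        logger.error("item %s requeued %d times for specs search", item_id, count)
        return False
    return True


def handle_specs_search_result(
    state: PipelineState, item_id: str, found_variant: Optional[str]
) -> str:
    if found_variant is None:  # the search produced nothing usable
        return "requeued" if requeue(state, item_id) else "gave_up"
    variant_id = state.variants.get(found_variant)
    if variant_id is not None:  # variant already exists, so auto-classify
        classify_item(state, item_id, variant_id)
        return "auto_classified"
    # Unknown variant: keep the row for the new-variant path (not shown here).
    state.specs_search_rows[item_id] = {"variant": found_variant}
    return "new_variant"


state = PipelineState(variants={"rtx 4060 ti 16gb": 1})
state.specs_search_rows["item-1"] = {"variant": None}
print(handle_specs_search_result(state, "item-1", "rtx 4060 ti 16gb"))  # auto_classified
print(state.specs_search_rows)  # {}
```

Routing both reclassify decisions and auto-classification through one `classify_item` helper is what keeps the stale-row cleanup from being forgotten in any single category.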


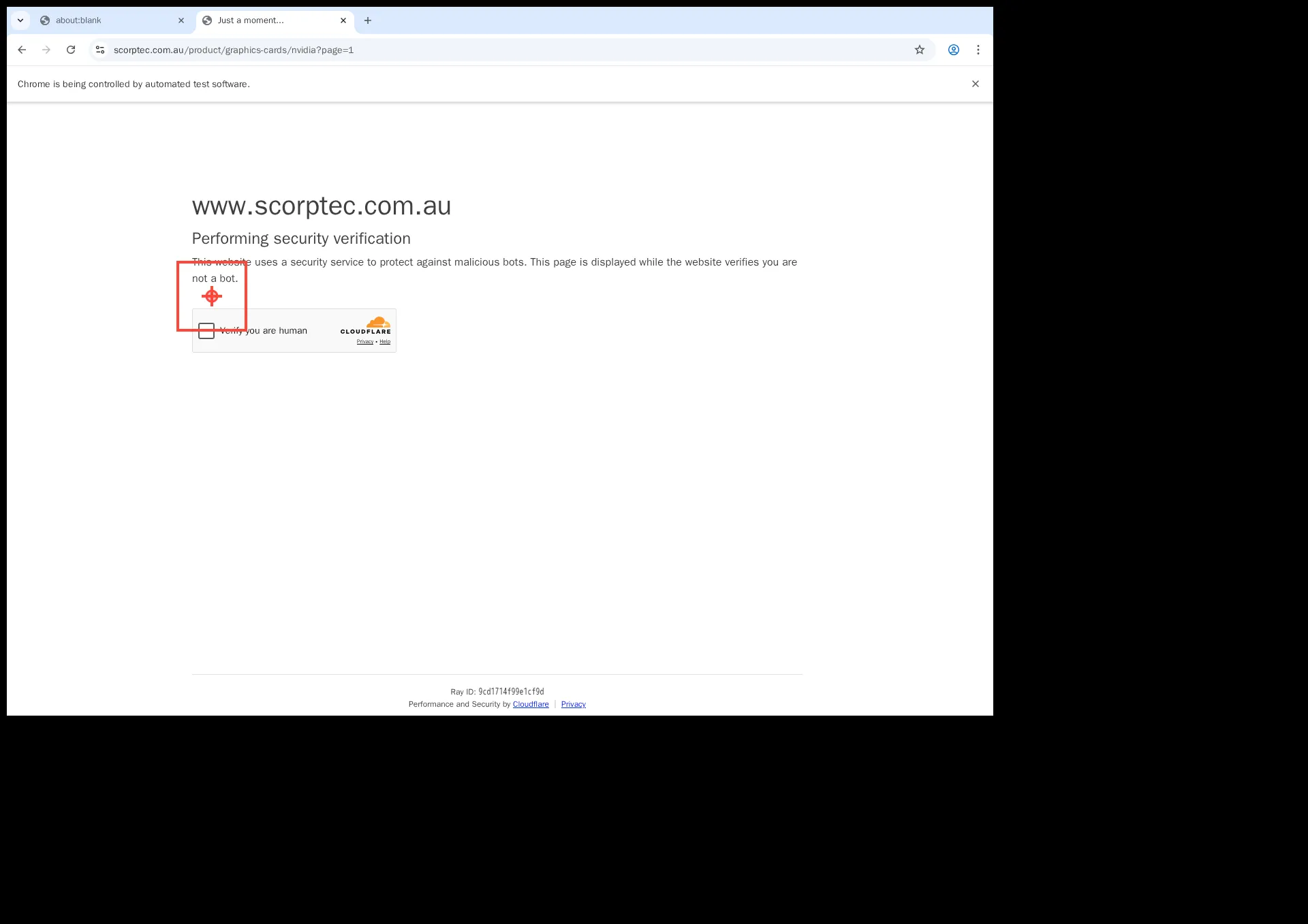

The Cloudflare bypass was failing:

- Added a click marker to the screenshots for debugging.

- Fixed the coordinates:
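A minimal sketch of keeping a click target and its debug marker in the same coordinate space, assuming the target's bounding box is known in CSS pixels and screenshots are taken at the device scale factor. In Playwright, for example, `page.mouse.click(x, y)` takes CSS pixels while `page.screenshot()` captures at device scale by default, so a marker drawn at unscaled coordinates lands in the wrong place on high-DPI screens. `Box`, `click_point`, `to_screenshot_pixels`, and `marker_bbox` are hypothetical names; this illustrates one common coordinate pitfall, not the actual fix.

```python
from typing import NamedTuple, Tuple


class Box(NamedTuple):
    """An element's bounding box in CSS pixels (x, y = top-left corner)."""

    x: float
    y: float
    width: float
    height: float


def click_point(box: Box, fx: float = 0.5, fy: float = 0.5) -> Tuple[float, float]:
    """Point inside `box` at fractions (fx, fy) of its size; (0.5, 0.5) is the centre."""
    return (box.x + box.width * fx, box.y + box.height * fy)


def to_screenshot_pixels(point: Tuple[float, float], device_scale_factor: float) -> Tuple[int, int]:
    """Convert a CSS-pixel point into pixel coordinates of a device-scale screenshot."""
    return (round(point[0] * device_scale_factor), round(point[1] * device_scale_factor))


def marker_bbox(center: Tuple[int, int], radius: int = 8) -> Tuple[int, int, int, int]:
    """Bounding box (x0, y0, x1, y1) of a circular marker around `center`."""
    cx, cy = center
    return (cx - radius, cy - radius, cx + radius, cy + radius)


checkbox = Box(x=100, y=200, width=300, height=65)  # hypothetical widget position
target = click_point(checkbox, fx=0.25)  # hypothetical: target sits left of centre
print(target)  # (175.0, 232.5)
print(marker_bbox(to_screenshot_pixels(target, 2.0)))  # (342, 457, 358, 473)
```

The returned box can be passed to Pillow's `ImageDraw.Draw(image).ellipse(...)` to draw the marker on the saved screenshot.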


Installed OpenClaw on the weekend and experimented with it a bit. Updated the internal MCP tools so they can query both ClickHouse and Postgres. Will experiment with using this to get agents to generate content.
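One way to let agents query both databases is a single tool that takes a `database` argument and routes the SQL to the matching client. A minimal sketch under assumptions: `make_query_tool`, the keyword guard, and the backend names are hypothetical, and SQLite connections stand in for the real ClickHouse and Postgres clients. If the internal tools use the official MCP Python SDK, a function like `query` could be registered with FastMCP's `@mcp.tool()` decorator.

```python
import re
import sqlite3
from typing import Callable, Dict, List

# Naive guard: a single SELECT/WITH statement with no obvious write keywords.
_READ_ONLY = re.compile(r"^\s*(select|with)\b", re.IGNORECASE)
_WRITE_WORDS = re.compile(
    r"\b(insert|update|delete|drop|alter|truncate|create|grant)\b", re.IGNORECASE
)


def make_query_tool(backends: Dict[str, Callable[[str], List[tuple]]]) -> Callable[[str, str], List[tuple]]:
    """Build one `query(database, sql)` tool that routes to several databases."""

    def query(database: str, sql: str) -> List[tuple]:
        if database not in backends:
            raise ValueError(f"unknown database {database!r}; expected one of {sorted(backends)}")
        statement = sql.strip().rstrip(";")
        if ";" in statement or not _READ_ONLY.match(statement) or _WRITE_WORDS.search(statement):
            raise ValueError("only a single read-only SELECT query is allowed")
        return backends[database](statement)

    return query


# SQLite connections stand in for the real Postgres and ClickHouse clients.
postgres = sqlite3.connect(":memory:")
clickhouse = sqlite3.connect(":memory:")
query = make_query_tool({
    "postgres": lambda sql: postgres.execute(sql).fetchall(),
    "clickhouse": lambda sql: clickhouse.execute(sql).fetchall(),
})
print(query("postgres", "SELECT 1 + 1"))  # [(2,)]
```

Keyword filtering is easy to get wrong, so the stronger guarantee is connecting the tool with a read-only database user; the regex here only rejects obvious mistakes.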